Bumps [transformers](https://github.com/huggingface/transformers) from 4.36.2 to 4.37.0. <details> <summary>Release notes</summary> <p><em>Sourced from <a href="https://github.com/huggingface/transformers/releases">transformers's releases</a>.</em></p> <blockquote> <h2>v4.37 Qwen2, Phi-2, SigLIP, ViP-LLaVA, Fast2SpeechConformer, 4-bit serialization, Whisper longform generation</h2> <h2>Model releases</h2> <h3>Qwen2</h3> <p>Qwen2 is the new model series of large language models from the Qwen team. Previously, the Qwen series was released, including Qwen-72B, Qwen-1.8B, Qwen-VL, Qwen-Audio, etc.</p> <p>Qwen2 is a language model series including decoder language models of different model sizes. For each size, we release the base language model and the aligned chat model. It is based on the Transformer architecture with SwiGLU activation, attention QKV bias, group query attention, mixture of sliding window attention and full attention, etc. Additionally, we have an improved tokenizer adaptive to multiple natural languages and codes.</p> <ul> <li>Add qwen2 by <a href="https://github.com/JustinLin610"><code>@JustinLin610</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28436">#28436</a></li> </ul> <h3>Phi-2</h3> <p>Phi-2 is a transformer language model trained by Microsoft with exceptionally strong performance for its small size of 2.7 billion parameters. It was previously available as a custom code model, but has now been fully integrated into transformers.</p> <ul> <li>[Phi2] Add support for phi2 models by <a href="https://github.com/susnato"><code>@susnato</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28211">#28211</a></li> <li>[Phi] Extend implementation to use GQA/MQA. 
by <a href="https://github.com/gugarosa"><code>@gugarosa</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28163">#28163</a></li> <li>update docs to add the <code>phi-2</code> example by <a href="https://github.com/susnato"><code>@susnato</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28392">#28392</a></li> <li>Fixes default value of <code>softmax_scale</code> in <code>PhiFlashAttention2</code>. by <a href="https://github.com/gugarosa"><code>@gugarosa</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28537">#28537</a></li> </ul> <h3>SigLIP</h3> <p>The SigLIP model was proposed in Sigmoid Loss for Language Image Pre-Training by Xiaohua Zhai, Basil Mustafa, Alexander Kolesnikov, Lucas Beyer. SigLIP proposes to replace the loss function used in CLIP by a simple pairwise sigmoid loss. This results in better performance in terms of zero-shot classification accuracy on ImageNet.</p> <ul> <li>Add SigLIP by <a href="https://github.com/NielsRogge"><code>@NielsRogge</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/26522">#26522</a></li> <li>[SigLIP] Don't pad by default by <a href="https://github.com/NielsRogge"><code>@NielsRogge</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28578">#28578</a></li> </ul> <h3>ViP-LLaVA</h3> <p>The VipLlava model was proposed in Making Large Multimodal Models Understand Arbitrary Visual Prompts by Mu Cai, Haotian Liu, Siva Karthik Mustikovela, Gregory P. 
Meyer, Yuning Chai, Dennis Park, Yong Jae Lee.</p> <p>VipLlava enhances the training protocol of Llava by marking images and interact with the model using natural cues like a “red bounding box” or “pointed arrow” during training.</p> <ul> <li>Adds VIP-llava to transformers by <a href="https://github.com/younesbelkada"><code>@younesbelkada</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/27932">#27932</a></li> <li>Fix Vip-llava docs by <a href="https://github.com/younesbelkada"><code>@younesbelkada</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/28085">#28085</a></li> </ul> <h3>FastSpeech2Conformer</h3> <p>The FastSpeech2Conformer model was proposed with the paper Recent Developments On Espnet Toolkit Boosted By Conformer by Pengcheng Guo, Florian Boyer, Xuankai Chang, Tomoki Hayashi, Yosuke Higuchi, Hirofumi Inaguma, Naoyuki Kamo, Chenda Li, Daniel Garcia-Romero, Jiatong Shi, Jing Shi, Shinji Watanabe, Kun Wei, Wangyou Zhang, and Yuekai Zhang.</p> <p>FastSpeech 2 is a non-autoregressive model for text-to-speech (TTS) synthesis, which develops upon FastSpeech, showing improvements in training speed, inference speed and voice quality. It consists of a variance adapter; duration, energy and pitch predictor and waveform and mel-spectrogram decoder.</p> <ul> <li>Add FastSpeech2Conformer by <a href="https://github.com/connor-henderson"><code>@connor-henderson</code></a> in <a href="https://redirect.github.com/huggingface/transformers/issues/23439">#23439</a></li> </ul> <h3>Wav2Vec2-BERT</h3> <p>The Wav2Vec2-BERT model was proposed in Seamless: Multilingual Expressive and Streaming Speech Translation by the Seamless Communication team from Meta AI.</p> <p>This model was pre-trained on 4.5M hours of unlabeled audio data covering more than 143 languages. 
It requires finetuning to be used for downstream tasks such as Automatic Speech Recognition (ASR), or Audio Classification.</p> <!-- raw HTML omitted --> </blockquote> <p>... (truncated)</p> </details> <details> <summary>Commits</summary> <ul> <li><a href="https://github.com/huggingface/transformers/commit/8e3e145b427196e014f37aa42ba890b9bc94275e"><code>8e3e145</code></a> [<code>GPTNeoX</code>] Fix BC issue with 4.36 (<a href="https://redirect.github.com/huggingface/transformers/issues/28602">#28602</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/344943b88a1df08b797721f600ab826371029a4a"><code>344943b</code></a> Fix <code>_speculative_sampling</code> implementation (<a href="https://redirect.github.com/huggingface/transformers/issues/28508">#28508</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/5fc3e60cd8824b4bcf31e1c51f5c29a83e277be1"><code>5fc3e60</code></a> [SigLIP] Don't pad by default (<a href="https://redirect.github.com/huggingface/transformers/issues/28578">#28578</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/5ee9fcb5cc7c6684d79e02e9d76be045373786f7"><code>5ee9fcb</code></a> Fix wrong xpu device in DistributedType.MULTI_XPU mode (<a href="https://redirect.github.com/huggingface/transformers/issues/28386">#28386</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/e156abd05a9910106351edaa4fa856c5ba93a0b5"><code>e156abd</code></a> [Whisper] Finalize batched SOTA long-form generation (<a href="https://redirect.github.com/huggingface/transformers/issues/27658">#27658</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/a485e469f62e5eaa55e3e6b786a573a3b939e30c"><code>a485e46</code></a> Add w2v2bert to pipeline (<a href="https://redirect.github.com/huggingface/transformers/issues/28585">#28585</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/d381d85466db4b6e998212d9788e91d201792355"><code>d381d85</code></a> Release: 
v4.37.0</li> <li><a href="https://github.com/huggingface/transformers/commit/db9a7e9d3dbd1b595f004597a0502cce0a96135a"><code>db9a7e9</code></a> Don't save <code>processor_config.json</code> if a processor has no extra attribute (<a href="https://redirect.github.com/huggingface/transformers/issues/2">#2</a>...</li> <li><a href="https://github.com/huggingface/transformers/commit/772307be7649e1333a933cfaa229dc0dec2fd331"><code>772307b</code></a> Making CTC training example more general (<a href="https://redirect.github.com/huggingface/transformers/issues/28582">#28582</a>)</li> <li><a href="https://github.com/huggingface/transformers/commit/186aa6befecc6e6f022fed34019a00d60884d557"><code>186aa6b</code></a> [Whisper] Fix audio classification with weighted layer sum (<a href="https://redirect.github.com/huggingface/transformers/issues/28563">#28563</a>)</li> <li>Additional commits viewable in <a href="https://github.com/huggingface/transformers/compare/v4.36.2...v4.37.0">compare view</a></li> </ul> </details> <br /> [](https://docs.github.com/en/github/managing-security-vulnerabilities/about-dependabot-security-updates#about-compatibility-scores) Dependabot will resolve any conflicts with this PR as long as you don't alter it yourself. You can also trigger a rebase manually by commenting `@dependabot rebase`. 

README.md of the aki_prj23_transparenzregister

Contributions

See CONTRIBUTING.md for how code should be formatted and which rules we have set for ourselves.

Defined entrypoints

The project currently provides the following entrypoints:

- data-transformation > Transfers all the data from the mongodb into the sql db to make it available as production data.

- data-processing > Processes the data using NLP methods and transfers matched data into the SQL table ready for use.

- reset-sql > Resets all sql tables in the connected db.

- copy-sql > Copies the content of a db to another db.

- webserver > Starts the webserver showing the analysis results.

- find-missing-companies > Retrieves meta information of companies referenced by others but not yet part of the dataset.

- ingest > Scheduled data ingestion of news articles as well as missing companies and financial data.

All entrypoints support the -h argument that shows a short help text.
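As a rough illustration of how such an entrypoint could expose its help text, the following sketch uses Python's standard argparse module, which adds -h automatically. The function name build_parser and the positional CONFIG argument are assumptions made for this example; they are not taken from the project's code.

```python
import argparse


def build_parser(prog: str, description: str) -> argparse.ArgumentParser:
    """Create a parser for one entrypoint; argparse adds -h/--help on its own."""
    parser = argparse.ArgumentParser(prog=prog, description=description)
    # Hypothetical positional argument: the literal "ENV" or a path to a *.json file.
    parser.add_argument(
        "config",
        metavar="CONFIG",
        help='"ENV" to read environment variables, or the path to a *.json config file',
    )
    return parser


# Parse an explicit argument list instead of sys.argv so the sketch runs anywhere.
parser = build_parser("webserver", "Starts the webserver showing the analysis results.")
args = parser.parse_args(["secrets.json"])
print(f"Using configuration source: {args.config}")
```

Calling parser.parse_args(["-h"]) would print the generated help text and exit.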

Application startup

Central Build

The packages and containers built by GitHub are accessible to users who have logged Docker into the GitHub Container Registry (GHCR) with a Personal Access Token.

Run docker login ghcr.io to start that process. The complete docs on logging in can be found here.

The application can then be started simply with docker compose up --pull.

Please note that some configuration via a .env file is necessary.

Local Build

The application can be built locally by running rebuild-and-start.bat, provided poetry and docker-compose are installed.

This will build a current *.whl and build the Docker container locally.

The configuration started this way is local-docker-compose.yaml.

Please note that some configuration via a .env file is necessary.

Application Settings

Docker configuration / Environment Variables

The current design of this application suggests that it is started inside a docker-compose configuration.

With docker-compose, the environment variables are commonly provided through a .env file in the root folder.

To use the environment-based configuration, start an application part with the ENV argument (for example, webserver ENV).

# Defines the container registry used. Default: "ghcr.io/fhswf/aki_prj23_transparenzregister"

CR=ghcr.io/fhswf/aki_prj23_transparenzregister

# main is the tag the main branch is tagged with and is currently in use

TAG=latest

# Configures the access port for the webserver.

# Default: "80" (local only)

HTTP_PORT=8888

# configures where the application root is based. Default: "/"

DASH_URL_BASE_PATHNAME=/transparenzregister/

# Enables basic auth for the application.

# Disabled when one is empty. Default: Disabled

PYTHON_DASH_LOGIN_USERNAME=some-login-to-webgui

PYTHON_DASH_LOGIN_PW=some-pw-to-login-to-webgui

# How often data should be ingested, in hours. Default: "4"

PYTHON_INGEST_SCHEDULE=12

# Settings for NER Service

# possible values: "spacy", "company_list", "transformer", Default: "transformer"

PYTHON_NER_METHOD=transformer

# possible values: "text", "title", Default: "text"

PYTHON_NER_DOC=text

# Settings for Sentiment Service

# possible values: "spacy", "transformer", Default: "transformer"

PYTHON_SENTIMENT_METHOD=transformer

# possible values: "text", "title", Default: "text"

PYTHON_SENTIMENT_DOC=text

# Access to the mongo db

PYTHON_MONGO_USERNAME=username

PYTHON_MONGO_HOST=mongodb

PYTHON_MONGO_PASSWORD=password

PYTHON_MONGO_PORT=27017

PYTHON_MONGO_DATABASE=transparenzregister

# Access to the postgres sql db

PYTHON_POSTGRES_USERNAME=username

PYTHON_POSTGRES_PASSWORD=password

PYTHON_POSTGRES_HOST=postgres-host

PYTHON_POSTGRES_DATABASE=db-name

PYTHON_POSTGRES_PORT=5432

# Optional path to an sqlite db; if set, it overrides the POSTGRES section

PYTHON_SQLITE_PATH=PathToSQLite3.db
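To illustrate how the SQL-related variables above could be combined, here is a minimal sketch that turns them into a connection string, with PYTHON_SQLITE_PATH taking precedence over the POSTGRES section. The function name sql_connection_string and the SQLAlchemy-style URL format are assumptions for this example and may differ from what the application actually does.

```python
import os
from collections.abc import Mapping
from urllib.parse import quote_plus


def sql_connection_string(env: Mapping[str, str] = os.environ) -> str:
    """Build a connection URL from the variables documented above (hypothetical helper)."""
    sqlite_path = env.get("PYTHON_SQLITE_PATH")
    if sqlite_path:
        # A configured sqlite path overrides the whole POSTGRES section.
        return f"sqlite:///{sqlite_path}"
    # Quote the credentials so special characters do not break the URL.
    user = quote_plus(env["PYTHON_POSTGRES_USERNAME"])
    password = quote_plus(env["PYTHON_POSTGRES_PASSWORD"])
    host = env["PYTHON_POSTGRES_HOST"]
    port = env["PYTHON_POSTGRES_PORT"]
    database = env["PYTHON_POSTGRES_DATABASE"]
    return f"postgresql://{user}:{password}@{host}:{port}/{database}"


example_env = {
    "PYTHON_POSTGRES_USERNAME": "username",
    "PYTHON_POSTGRES_PASSWORD": "password",
    "PYTHON_POSTGRES_HOST": "postgres-host",
    "PYTHON_POSTGRES_DATABASE": "db-name",
    "PYTHON_POSTGRES_PORT": "5432",
}
print(sql_connection_string(example_env))
```

Passing a plain dictionary instead of os.environ keeps the sketch easy to try without touching the real environment.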

Local execution / config file

Create a *.json file in the root of this repo with the structure shown below (replace the values with the desired config).

Please note that an sqlite entry overrides the postgres entry.

To use the *.json file, pass its path as an argument when using an entrypoint (for example, webserver secrets.json).

{

"sqlite": "path-to-sqlite.db",

"postgres": {

"username": "username",

"password": "password",

"host": "localhost",

"database": "db-name",

"port": 5432

},

"mongo": {

"username": "username",

"password": "password",

"host": "localhost",

"database": "transparenzregister",

"port": 27017

}

}
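The precedence rule for this file can be sketched as follows: if an sqlite entry is present, it is used and the postgres entry is ignored. The helper select_sql_settings and the shape of its return value are hypothetical and only serve to make the rule concrete.

```python
import json


def select_sql_settings(config: dict) -> dict:
    """Pick the SQL backend from a parsed config (hypothetical helper)."""
    if config.get("sqlite"):
        # The sqlite entry wins over the postgres entry.
        return {"backend": "sqlite", "path": config["sqlite"]}
    return {"backend": "postgres", **config["postgres"]}


# A shortened version of the structure shown above, parsed from a string
# instead of a file so the sketch is self-contained.
example = json.loads(
    """
    {
        "sqlite": "path-to-sqlite.db",
        "postgres": {"username": "username", "password": "password",
                     "host": "localhost", "database": "db-name", "port": 5432}
    }
    """
)
settings = select_sql_settings(example)
print(settings)
```

Removing the "sqlite" key from the example makes the function fall back to the postgres entry.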

sqlite vs. postgres

We support both sqlite and postgres because a local sqlite db can be filled in about 10% of the time it takes to fill the remote db.

Even though we use sqlite for testing, its connection cannot handle multithreading or multiprocessing.

This clashes with the webserver. For production mode use the postgres-db.
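One way this kind of limitation shows up can be reproduced with Python's built-in sqlite3 module, whose connections by default refuse to be used from a thread other than the one that created them. This sketch only demonstrates the standard-library behavior; how the application itself opens its connections is not shown here, and the helper name is made up for the example.

```python
import sqlite3
import threading
from typing import Optional


def use_connection_from_other_thread(connection: sqlite3.Connection) -> Optional[Exception]:
    """Run a query on the given connection from a second thread and return any error."""
    errors = []

    def worker() -> None:
        try:
            connection.execute("SELECT 1")
        except sqlite3.ProgrammingError as error:
            # Raised because the connection was created in a different thread.
            errors.append(error)

    thread = threading.Thread(target=worker)
    thread.start()
    thread.join()
    return errors[0] if errors else None


connection = sqlite3.connect(":memory:")  # default: check_same_thread=True
error = use_connection_from_other_thread(connection)
print(type(error).__name__, error)
connection.close()
```

A webserver that serves requests from several threads or processes runs into this class of problem, which is one reason a server-based database such as postgres is the safer choice there.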

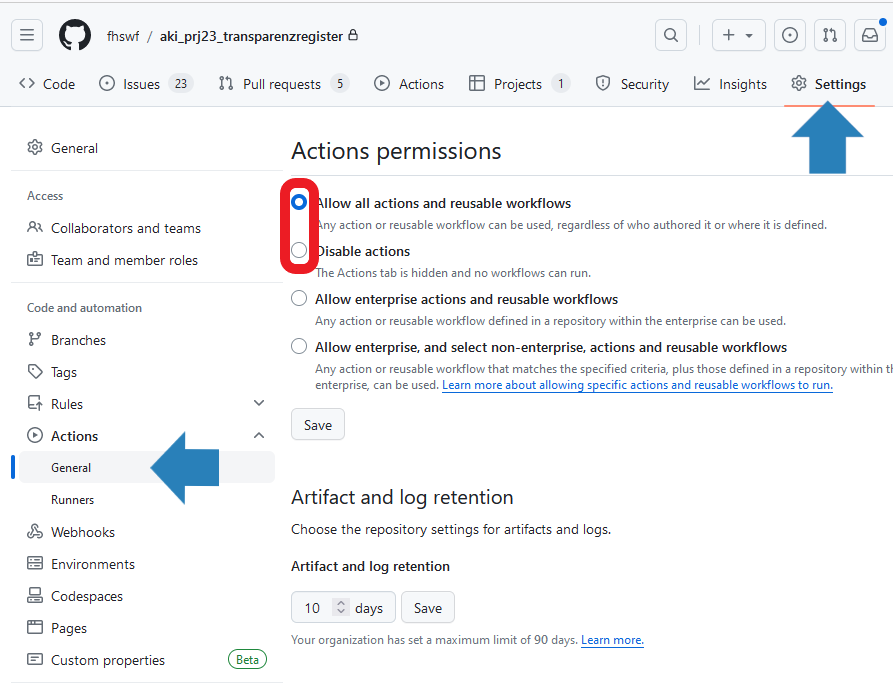

Re-Enable Actions & Dependabot

After the project is over, all parts that consume compute resources should be turned off.

To enable all the features please enable the GitHub Actions first. The following image shows where the buttons to enable the actions can be found.

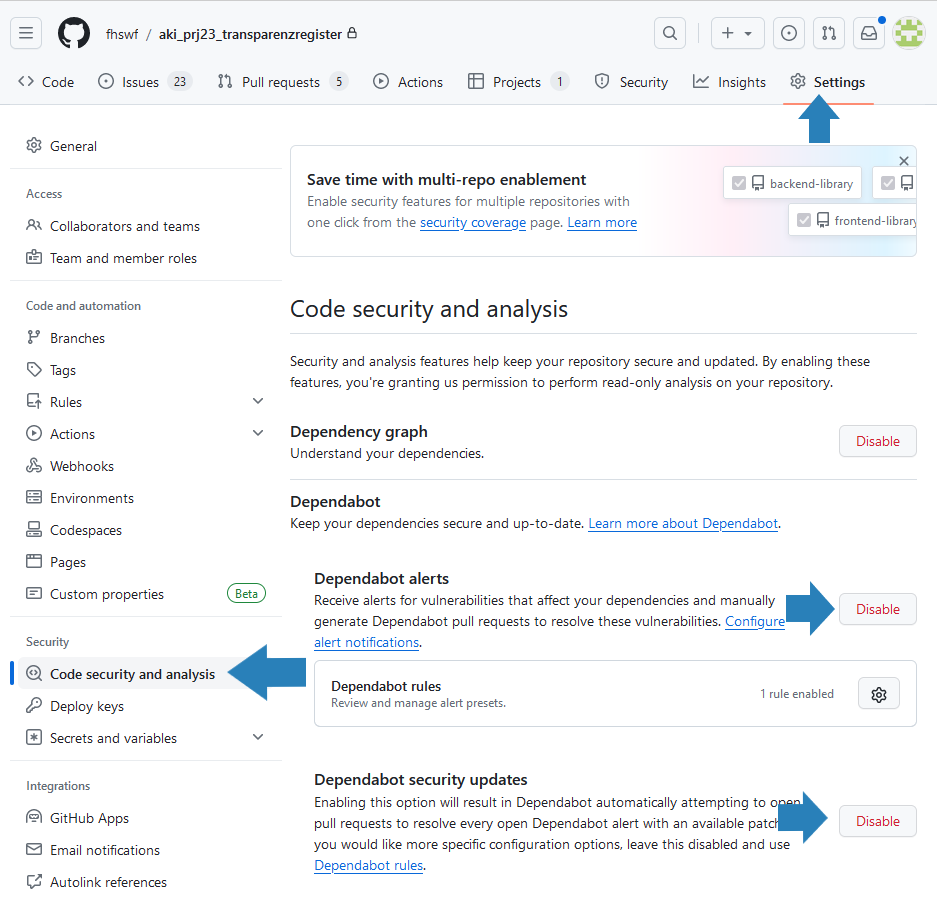

Additionally, it is recommended to enable Dependabot.

Please note that patches are currently only requested for critical security fixes.

Run poetry update before restarting the project to update all the Python dependencies.

Note that both security updates and alerts should be enabled.